Someone at the Numerix-DSP blog made the reasonable suggestion of interpolating the results of an FFT using a parabola. I did some experimenting with this method and found that it was not any worse than my other methods and it seemed less likely to turn out spurious results.

I've moved out the Maxima domain with my calculations and into Common Lisp, but to create the calculation for the parabolic interpolation, Maxima was of some help. First, we define a function for taking three points, and producing the parabola that is defined by that.

Then we will solve for it. We make the observation that the shape of the parabola is the same regardless of shifting it left or right along the x-axis (or index axis, which corresponds to the frequency axis when we multiply by sampling rate). This being the case, we have no need to supply the final indices but can consider our middle point as index 0, the point before it as index -1 and the point after it as index 1. This simplifies the calculation so that we can find the location of the peak relative to the index we are examining and apply the results of this calculation to it. Time to pull in some grade 12 math and differentiate that parabola and find the vertex (see Fig. 1).

|

| Fig. 1. The green dot is approximately where the vertex of this parabola is. The result of this calculation would have x as slightly positive. |

On the basis of this we can produce a common lisp function to perform this calculation.

Note that if the peak is to the left of our high point, we will get a negative x value and if it is to the right, a positive value. It will be on the interval \((-1, 1)\). Some code that goes through the results of an FFT and takes the absolute value as in our previous work and finds local peaks is all we need now. Where there is a local peak of consecutive absolute values \(a\), \(b\), and \(c\) such that \(a, c < b\), do some interpolation using parabola-peak-frequency to get an interpolated amplitude and an index adjustment for the frequency index. Then the calculation for the the frequency becomes

\[(o + x) s/N\]

where o is the index of \(b\), x is the result from parabola-peak-frequency, s is the sample rate, and \(N \)is the length of elements in the FFT.

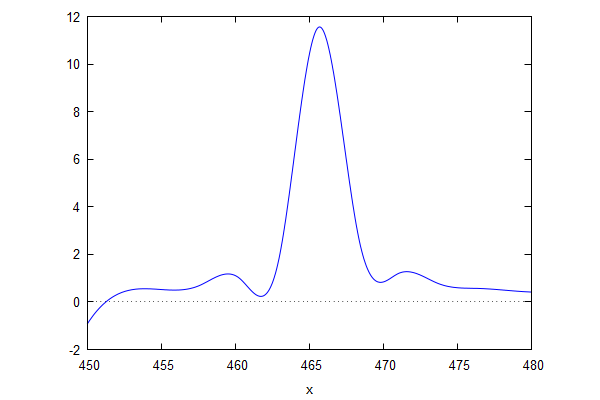

Now, maybe we can start applying some math to some real world problems, like, um, Mozart's Piano Concerto No. 13, movement II? Maybe just a snatch within the first second of it for now.

|

| Fig. 2. Mozart, anyone? |

A general outline on how to proceed with this is to identify all of the local peaks, take the top K highest amplitude data points, interpolate those using the parabolic interpolation above, apply pitch correction (if desired) and midi note calculation. This doesn't address determining pitch durations. Another interesting problem is to try to guess which lower peaks are harmonics of lower pitches. These, and probably other confounding problems, yet await.